Recap of WebArena.

We explored the inner workings of WebArena in detail in our last edition of The AI Brains Newsletter. WebArena is a benchmark for the functional correctness of tasks executed by an agent (an LLM with access to tools such as a calculator, a scratchpad and a knowledge base). The researchers recreated websites like Amazon, GitLab and Reddit in a local environment and wrote tasks that vary in intent and complexity. An agent's execution trajectory on each task is evaluated and cross-checked against an answer key prepared by humans.

The agent combines a screenshot of the website with the HTML DOM and the accessibility tree to identify the available actions. It then decides its next steps through internal reasoning known as Chain-of-Thought.

How is WebVoyager different from WebArena :

WebArena replicates websites with massive human traffic (like Amazon and Reddit) to bring task diversity into agent testing. However, the environment it creates is free from real-world challenges such as CAPTCHAs, unpredictable content modifications and configuration changes, which can obstruct an LLM agent's reasoning over time.

Unlike WebArena, WebVoyager puts the agent out on the open World Wide Web, which is full of real-world challenges like floating ads, pop-up windows and constant updates. The researchers behind WebVoyager argue that a successful web agent should adapt to these challenges and solve tasks robustly and consistently.

🔍 Understanding Set-of-Mark (SOM) Prompting and its significance in WebVoyager:

WebVoyager relies on GPT-4V's vision capabilities to navigate the open web and make decisions. It uses a technique called Set-of-Mark Prompting, which improves GPT-4V's general ability to understand images.

Deviating for a moment to understand Visual Grounding.

In LLM terminology, visual grounding is the ability of a model to look at an image and connect what it sees to language. In other words, it matches objects and regions in the image to specific textual information. For example, look at the image below and answer these questions:

Source : Random Google Image

How many sunglasses do you see?

Can you find my keys on the table and point your finger at their location?

Is my phone screen on?

What happened there? You answered these questions by:

Identifying the objects in the image that best fit the description of sunglasses, and counting them.

Looking at where the keys are, because you understand the spatial location of the object that looks like keys.

Noticing the visible wallpaper on the phone and answering the question.

Visual grounding is the same process happening inside the LLM when it reads an image and tries to connect it back to the question.

Gemini answering the same questions based on an image given.

Back to Set-of-Mark Prompting: GPT-4V's visual grounding is relatively weak and has room to improve. In the example below, GPT-4V is asked to identify the squares containing traffic lights while solving a CAPTCHA, and its answer is wrong.

GPT-4V's response without Set-of-Mark Prompting.

In Set-of-Mark Prompting, the input image is partitioned into a set of semantically meaningful regions. Each region is then overlaid with a mark, which can take various formats such as numbers, letters, masks or boxes.

Source : Research paper on Set of Mark Prompting

In the image above, we see that introducing the Set-of-Mark (SOM) method significantly improves GPT-4V's answers.

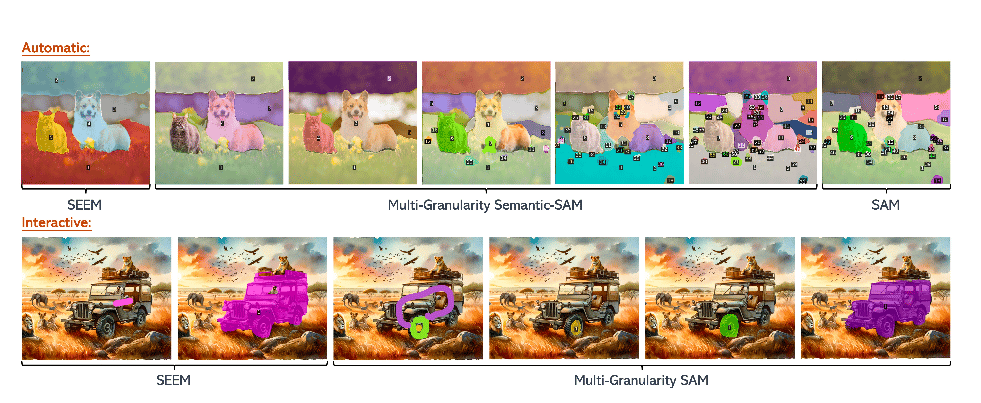

Image Partition : The input image is first partitioned into semantically meaningful regions. This is done with image segmentation tools such as SEEM, MaskDINO, SAM and Semantic-SAM. [At this point, I think I should write a separate post on these image segmentation models]

The image above shows how the same image is partitioned by different segmentation tools.

Generating Set-of-Marks : Once an image is partitioned, each region gets a mark. The marks are usually alphanumeric because they are compact and easy to identify. Boxes and mask boundaries serve as auxiliary marks. The mark type should also depend on the image, to avoid conflicts with the original content [Example : if the image contains numbers, it's better to use letters as marks to avoid confusion.]
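A hypothetical helper makes the image-dependent choice of mark type concrete. It is not taken from the Set-of-Mark paper. It assumes the visible text in the image has already been extracted (for example by OCR). It switches from numeric marks to letter marks whenever that text contains digits.

```python
import string


def choose_mark_labels(visible_text: str, count: int) -> list[str]:
    """Pick mark labels that are unlikely to clash with content in the image.

    Hypothetical rule: if the image already shows digits, use letters
    (A, B, ..., Z, AA, AB, ...); otherwise use numbers (1, 2, 3, ...).
    """
    if any(ch.isdigit() for ch in visible_text):
        labels = []
        for i in range(count):
            # Spreadsheet-style letter sequence: 0 -> A, 25 -> Z, 26 -> AA.
            name, n = "", i
            while True:
                name = string.ascii_uppercase[n % 26] + name
                n = n // 26 - 1
                if n < 0:
                    break
            labels.append(name)
        return labels
    return [str(i + 1) for i in range(count)]
```

For a street photo containing "Exit 12", this returns letter marks. For a plain photo of a cat, it returns numbers.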

In the figure above, you'll notice many marks all over the image. It may look overlapping and confusing, but SOM uses a mark allocation algorithm that works step by step to keep the marks from confusing the model:

Prioritise by Size: First, figure out the area of each region (object) and sort them from smallest to largest. The algorithm prioritises placing marks on smaller objects first.

Avoid Overlap: For each region, before placing a mark, the algorithm checks to make sure it doesn't overlap with any marks that were already placed on the smaller regions. This prevents clutter.

Distance Transform for Optimal Placement: The algorithm uses a "distance transform". Imagine you're inside a region and want to be as far away as possible from all its edges; the distance transform finds that spot. This is where the mark is placed: away from the edges and clear of other marks.

Handle Tiny Regions: If a region is really small, putting the mark inside might cover the whole thing. In this case, the algorithm nudges the mark slightly outside the region, but still close enough so it's clear which region it belongs to.
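The size-ordering, overlap-avoidance and distance-transform steps can be sketched in plain Python. This is a simplified reading of the allocation algorithm, not the paper's code, and it rests on these assumptions:

- Regions are boolean pixel masks.
- "Avoid overlap" is implemented by excluding pixels already claimed by smaller regions.
- The distance transform is a city-block (4-neighbour) breadth-first search rather than a Euclidean one.
- The tiny-region nudge is left out, and every region is assumed to be non-empty.

```python
from collections import deque

Mask = list[list[bool]]  # True where a pixel belongs to the region


def area(mask: Mask) -> int:
    return sum(sum(row) for row in mask)


def distance_to_edge(mask: Mask) -> list[list[int]]:
    """City-block distance from each region pixel to the nearest non-region
    pixel; the image border counts as non-region."""
    h, w = len(mask), len(mask[0])
    # Pad with a one-pixel ring of background so the image border acts as an edge.
    padded = [[False] * (w + 2)]
    padded += [[False, *row, False] for row in mask]
    padded += [[False] * (w + 2)]
    dist = [[-1 if v else 0 for v in row] for row in padded]
    queue = deque((y, x) for y in range(h + 2) for x in range(w + 2) if not padded[y][x])
    # Multi-source BFS outward from every background pixel.
    while queue:
        y, x = queue.popleft()
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            if 0 <= ny < h + 2 and 0 <= nx < w + 2 and dist[ny][nx] == -1:
                dist[ny][nx] = dist[y][x] + 1
                queue.append((ny, nx))
    return [row[1:-1] for row in dist[1:-1]]


def allocate_marks(regions: list[Mask]) -> dict[int, tuple[int, int]]:
    """Return a (row, col) mark position for each region index."""
    h, w = len(regions[0]), len(regions[0][0])
    claimed = [[False] * w for _ in range(h)]  # pixels of regions already marked
    marks: dict[int, tuple[int, int]] = {}
    # 1. Prioritise by size: smallest regions are marked first.
    for idx in sorted(range(len(regions)), key=lambda i: area(regions[i])):
        mask = regions[idx]
        # 2. Avoid overlap: ignore pixels of smaller, already-marked regions.
        free = [[mask[y][x] and not claimed[y][x] for x in range(w)] for y in range(h)]
        if area(free) == 0:
            free = mask  # simplification: fall back to the whole region
        # 3. Distance transform: pick the free pixel farthest from any edge.
        dist = distance_to_edge(free)
        _, row, col = max((dist[y][x], y, x) for y in range(h) for x in range(w) if free[y][x])
        marks[idx] = (row, col)
        for y in range(h):
            for x in range(w):
                claimed[y][x] = claimed[y][x] or mask[y][x]
    return marks
```

Consider a 5×5 image containing a single-pixel object in the corner. That object is marked first, at its own pixel. The large background region's mark then lands in the centre, away from it. A Euclidean transform, such as SciPy's `distance_transform_edt`, would give rounder "centres" than this city-block version.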

Source : Set of Marks Paper.

Once the marks are generated, there are two ways to prompt a model like GPT-4V with the marked image:

1. Plain Text Prompt (Like Talking Normally): You can ask questions about the image without mentioning the numbers. The AI is smart enough to figure out what you're talking about.

2. Interleaved Text Prompt (Using the Numbers): You can use the numbers in your questions to be very specific.

The importance of SOM Prompting for Vision :

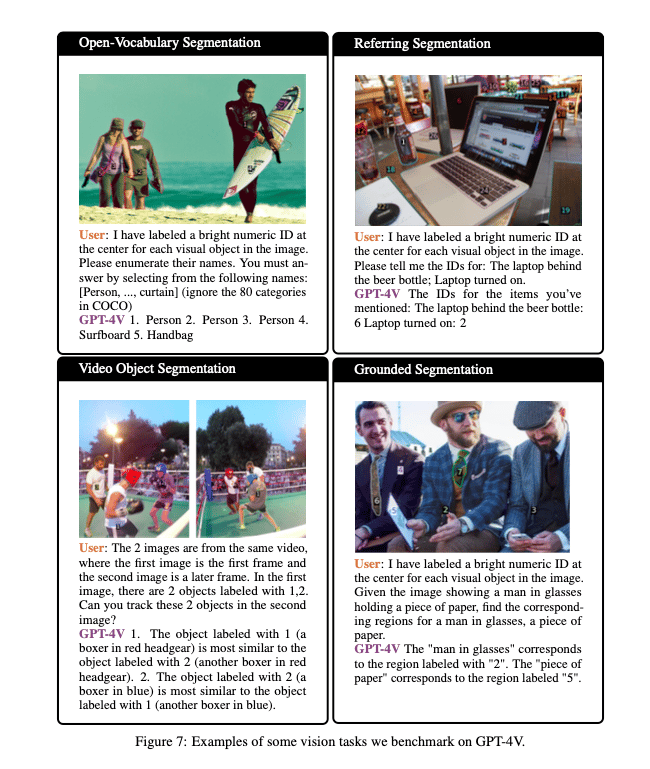

In SOM, each mark is tied to a specific region of the image, so it's easier to trace the AI's description back to the image. For example, if there are 3 apples in a basket, each apple gets its own label, which helps you pick the one in the centre or on the right. This creates triplets (r_k, m_k, text_k): each region r_k has a corresponding mark m_k and a textual description text_k. The triplets let a model like GPT-4V refer to parts of an image far more precisely. Set-of-Mark's performance is tested by giving GPT-4V different vision tasks, as shown in the figure.
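The (r_k, m_k, text_k) triplets make answers traceable: when the model mentions a mark, you can look up its region. Below is a hypothetical sketch built on these assumptions:

- The model writes marks in square brackets like "[2]". This convention is illustrative, not from the paper.
- The `Triplet` type is made up for this example.
- Each region is simplified to a bounding box.

```python
import re
from dataclasses import dataclass


@dataclass
class Triplet:
    mark: str                        # m_k: the label drawn on the image
    box: tuple[int, int, int, int]   # r_k, simplified to a box (x0, y0, x1, y1)
    text: str                        # text_k: a short description of the region


def regions_mentioned(answer: str, triplets: list[Triplet]) -> list[Triplet]:
    """Map every [mark] reference in a model answer back to its region."""
    by_mark = {t.mark: t for t in triplets}
    found: list[Triplet] = []
    for mark in re.findall(r"\[(\w+)\]", answer):
        if mark in by_mark and by_mark[mark] not in found:
            found.append(by_mark[mark])
    return found
```

Suppose the apples are marked 1, 2 and 3 and the model answers "The ripest one is [2]". This resolves the answer to the centre apple's region.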

We observe that GPT-4V equipped with Set-of-Mark Prompting performs these vision tasks with a high degree of accuracy. In a zero-shot setting, the Set-of-Mark paper reports it outperforming a fully fine-tuned specialist model on referring expression comprehension and segmentation, showing stronger semantic and visual understanding.

WebVoyager : SOAT

Back to WebVoyager. Its environment is defined by four components, together called SOAT [State space, Observation space, Action space and Transition function]. Here's a quick expansion:
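The SOAT components can be written compactly. This sketch follows my reading of the formalism in the WebVoyager paper. The policy symbol \pi and the exact shape of the history h_t are my own shorthand, not the paper's notation:

```latex
\begin{aligned}
\mathcal{E} &= (\mathcal{S}, \mathcal{O}, \mathcal{A}, \mathcal{T}), \qquad \mathcal{T} : \mathcal{S} \times \mathcal{A} \to \mathcal{S} \\
a_t &= \pi(o_t, h_t), \qquad h_t = (o_1, a_1, \dots, o_{t-1}, a_{t-1}) \\
s_{t+1} &= \mathcal{T}(s_t, a_t), \qquad o_{t+1} \in \mathcal{O} \text{ is the observation of } s_{t+1}
\end{aligned}
```

Here o_t is the SOM-annotated screenshot plus its supporting text. The episode ends when the agent answers or runs out of steps.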

Conceptual definition of the space.

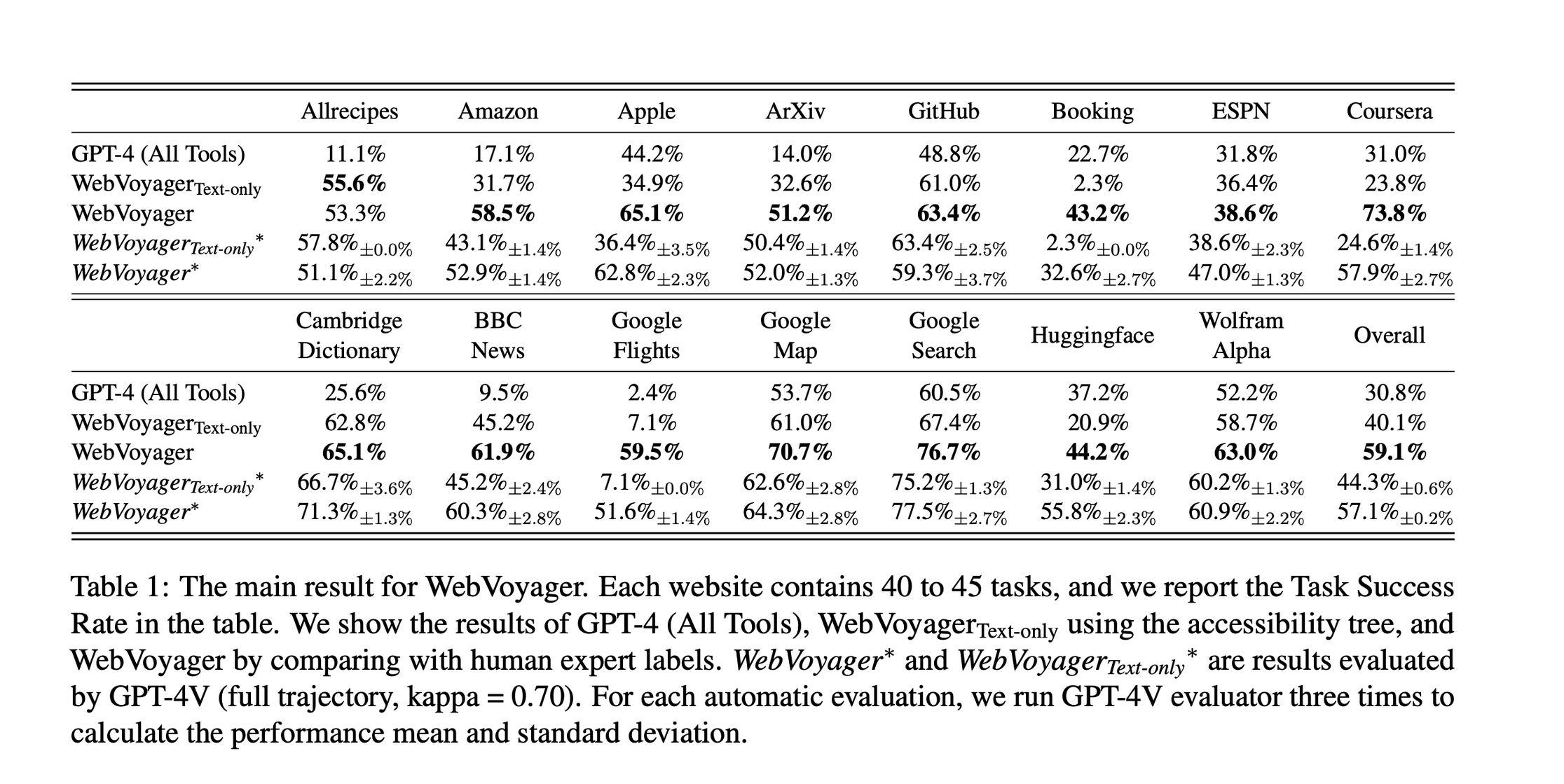

WebVoyager State Space : 15 websites are selected to test an agent's task success rate in WebVoyager: Allrecipes, Amazon, Apple, ArXiv, BBC News, Booking, Cambridge Dictionary, Coursera, ESPN, GitHub, Google Flights, Google Map, Google Search, Huggingface and Wolfram Alpha. The agent drives the browser through Selenium.

Observation Space : In our previous edition we saw how WebArena uses the HTML DOM tree to represent the overall structure of a webpage. The major challenge with this approach is that DOM files contain a lot of text, which can overwhelm the agent's decision making. Instead of an HTML DOM file, WebVoyager uses the Set-of-Mark prompting method. It overlays bounding boxes on the interactive elements of the webpage so the agent can better understand the screenshot.

Instead of a segmentation model, WebVoyager uses GPT-4V-ACT, a JavaScript tool that extracts the interactive elements of a webpage based on their element types. It overlays bounding boxes with numerical labels on those elements, as shown in the image below.

Webpage as seen by GPT-4V with SOM in WebVoyager.

Black colour is chosen as the border for clarity and better results. Each box is labeled with a number and we give the agent more information about each highlighted element, like:

The text inside it: What the button or link says.

The type of element: Is it a button, a link, a text box, etc.?

Any extra comments: Information from the website's code that might be helpful.
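The labelled screenshot is paired with a short text listing of these details. The sketch below is a hypothetical Python stand-in for that step, built on these assumptions:

- The JavaScript tool has already extracted the elements.
- Each element arrives as a dict with `tag`, `text`, `type` and `aria_label` keys.
- The set of interactive tags and the line format are not taken from the paper.

It keeps only common interactive tags, numbers them in page order and renders one line per element.

```python
INTERACTIVE_TAGS = {"a", "button", "input", "select", "textarea"}  # assumed subset


def label_elements(elements: list[dict]) -> list[tuple[int, dict]]:
    """Keep interactive elements and number them in page order (0, 1, 2, ...)."""
    interactive = [e for e in elements if e.get("tag") in INTERACTIVE_TAGS]
    return list(enumerate(interactive))


def describe(labelled: list[tuple[int, dict]]) -> str:
    """One line per element: label, element type, visible text, aria-label."""
    lines = []
    for label, e in labelled:
        kind = e["tag"]
        if e.get("type"):
            kind += f' type="{e["type"]}"'  # e.g. <input type="text">
        line = f'[{label}]: <{kind}> "{e.get("text", "")}"'
        if e.get("aria_label"):
            line += f' (aria-label: "{e["aria_label"]}")'
        lines.append(line)
    return "\n".join(lines)
```

A non-interactive `div` is dropped, a search box becomes `[0]: <input type="text"> "" (aria-label: "Search")`, and a button becomes `[1]: <button> "Go"`.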

To keep things simple, the agent may only interact with the current webpage tab; it can't open new tabs. At each step, the agent takes three inputs before acting: the screenshot of the current view, the supporting text and the interaction history. If the model's response cannot be executed (for example, it is malformed or the action fails), an error prompt is fed back to the LLM to regenerate the response. Each such retry also counts as one extra step in the execution trajectory.
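The step loop can be sketched as follows, including the rule that a failed response is retried and still counts as a step. Everything here is hypothetical:

- `observe` stands in for taking the screenshot and supporting text.
- `model` stands in for a GPT-4V call.
- `execute` stands in for the Selenium side.
- The step budget of 15 and the 3-step history window are assumptions; the paper's own limits may differ.

All three stand-ins are plain callables, so the loop can be tested without a browser.

```python
from typing import Callable, Optional


def run_task(
    task: str,
    observe: Callable[[], str],
    model: Callable[[str, str, list[str], str], str],
    execute: Callable[[str], None],
    max_steps: int = 15,
    history_window: int = 3,
) -> tuple[Optional[str], int]:
    """Return (final answer or None, number of steps used)."""
    history: list[str] = []  # actions executed so far
    error = ""               # error message from the previous step, if any
    for step in range(1, max_steps + 1):
        # Observation of the current tab: screenshot + supporting text.
        observation = observe()
        # Only the most recent history is passed, to bound the prompt size.
        response = model(task, observation, history[-history_window:], error)
        if response.startswith("ANSWER;"):
            return response.removeprefix("ANSWER;").strip(), step
        try:
            execute(response)
        except Exception as exc:
            # Feed the error back so the model regenerates; the retry costs a step.
            error = f"Error: {exc}"
            continue
        history.append(response)
        error = ""
    return None, max_steps  # ran out of steps
```

Returning `(None, max_steps)` is what "running out of steps" looks like in this sketch.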

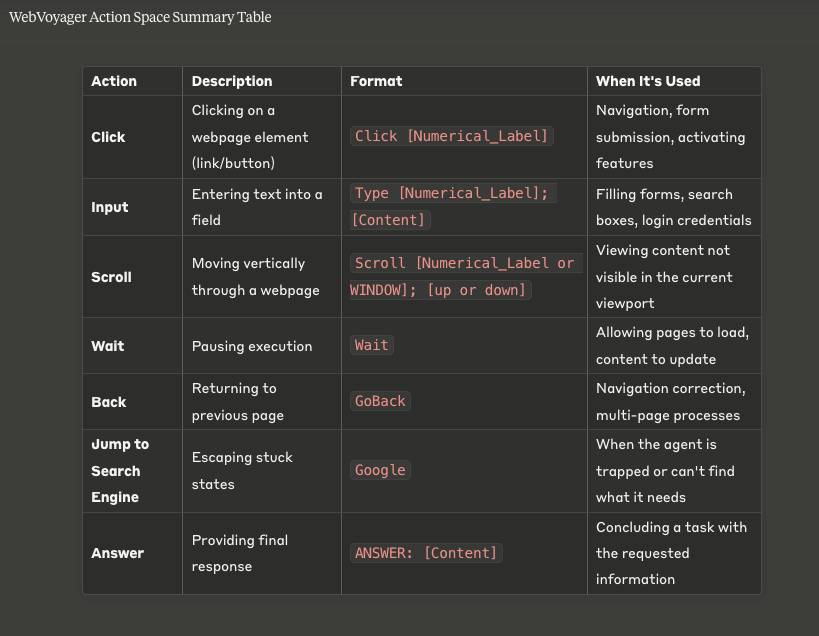

The Action space : The actions available to the agent in WebVoyager are shown in the figure (click, type, scroll, wait, go back, jump to a search engine and answer). These are the possible interactions the agent can perform on each of the 15 websites it is tested on.
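The model's reply has to be parsed into one of these actions, so a strict parser turns an unusable reply into a catchable error. Below is a hypothetical parser. The action strings (`Click [5]`, `Type [3]; text`, `Scroll [WINDOW]; down`, `Wait`, `GoBack`, `Google`, `ANSWER; text`) are an assumed format, modelled loosely on the paper's prompt rather than copied from it.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Action:
    name: str                      # click, type, scroll, wait, goback, google, answer
    target: Optional[str] = None   # numerical label, or "WINDOW" for scroll
    content: Optional[str] = None  # typed text, scroll direction or final answer


PATTERNS = [
    ("click", re.compile(r"Click \[(\d+)\]$")),
    ("type", re.compile(r"Type \[(\d+)\]; (.+)$")),
    ("scroll", re.compile(r"Scroll \[(\d+|WINDOW)\]; (up|down)$")),
    ("answer", re.compile(r"ANSWER; (.+)$")),
]
NO_ARG = {"Wait": "wait", "GoBack": "goback", "Google": "google"}


def parse_action(text: str) -> Action:
    """Parse one model reply; raise ValueError so the caller can ask for a retry."""
    text = text.strip()
    if text in NO_ARG:
        return Action(NO_ARG[text])
    for name, pattern in PATTERNS:
        m = pattern.match(text)
        if m:
            if name == "answer":
                return Action(name, content=m.group(1))
            groups = m.groups()
            return Action(name, target=groups[0], content=groups[1] if len(groups) > 1 else None)
    raise ValueError(f"Unparseable action: {text!r}")
```

A free-form reply such as "Click the blue button" raises `ValueError` instead of silently doing the wrong thing.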

Creating the Dataset :

A variety of tasks are created through a hybrid approach that combines human-written tasks with the Self-Instruct method.

Data Creation process using Self-Instruct mechanism.

A seed set of popular tasks is written by humans, based on their own interactions with the different websites. These tasks are curated for quality [tasks may be creative and high-level, and should be reusable as templates]. The approved tasks are then fed to GPT-4, which uses the Self-Instruct approach to generate variations from this small task pool. This is like looking at an example math problem and then writing similar ones of your own.
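The generation loop can be sketched as below. It is a hypothetical outline in the spirit of Self-Instruct, not the paper's pipeline, and it makes these assumptions:

- `generate` stands in for a GPT-4 call.
- `approve` stands in for the human reviewer.
- The number of in-context examples is arbitrary.
- The near-duplicate filter uses `difflib` similarity as a simple stand-in; the Self-Instruct paper filters with ROUGE-L instead.

```python
import difflib
import random
from typing import Callable


def is_near_duplicate(task: str, pool: list[str], threshold: float = 0.7) -> bool:
    """True if the task is too similar to any task already in the pool."""
    return any(
        difflib.SequenceMatcher(None, task.lower(), seen.lower()).ratio() >= threshold
        for seen in pool
    )


def expand_tasks(
    seed_tasks: list[str],
    generate: Callable[[str], list[str]],
    approve: Callable[[str], bool],
    rounds: int = 1,
    k: int = 3,
    seed: int = 0,
) -> list[str]:
    """Grow a task pool from a few human-written seed tasks."""
    rng = random.Random(seed)
    pool = list(seed_tasks)
    for _ in range(rounds):
        # Sample a few pool tasks as in-context examples for the generator.
        examples = rng.sample(pool, min(k, len(pool)))
        prompt = "Write new web tasks similar to these:\n" + "\n".join(f"- {t}" for t in examples)
        for candidate in generate(prompt):
            # Keep only novel candidates that the reviewer approves.
            if not is_near_duplicate(candidate, pool) and approve(candidate):
                pool.append(candidate)
    return pool
```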

Annotation Process :

The generated tasks are then manually checked to confirm they can actually be completed on the target website. This yields 40+ tasks per website, 643 tasks in total.

For every task, the answer is categorised as either "Golden" or "Possible". "Golden" answers are stable and comprehensive, with all valid responses listed. "Possible" answers cover open-ended tasks (like summarisation), tasks with multiple valid answers that can't all be listed, and tasks involving real-time information that changes (like flight prices).

Of the 643 tasks, 22.3% (about 143) had "Golden" answers, while 77.7% (about 500) were classified as "Possible".

This approach acknowledges that many web-based questions don't have single "correct" answers due to the dynamic nature of online information and the subjective nature of some tasks.

The primary evaluation metric for an agent in WebVoyager is the task success rate, which measures whether a task is completed without considering whether the steps taken were optimal. Two main variants are compared:

- The full multimodal WebVoyager (let's call it the "Visual model") sees SOM-annotated screenshots.
- The "Text-only model" receives only the accessibility tree as input to predict its actions.

The paper also compares against GPT-4 (All Tools), which has access to tools such as web browsing, vision, code analysis and plugins. A fixed browser window size of 1024 × 768 pixels ensures that the screenshots in the observations are consistently sized.

Evaluation methods

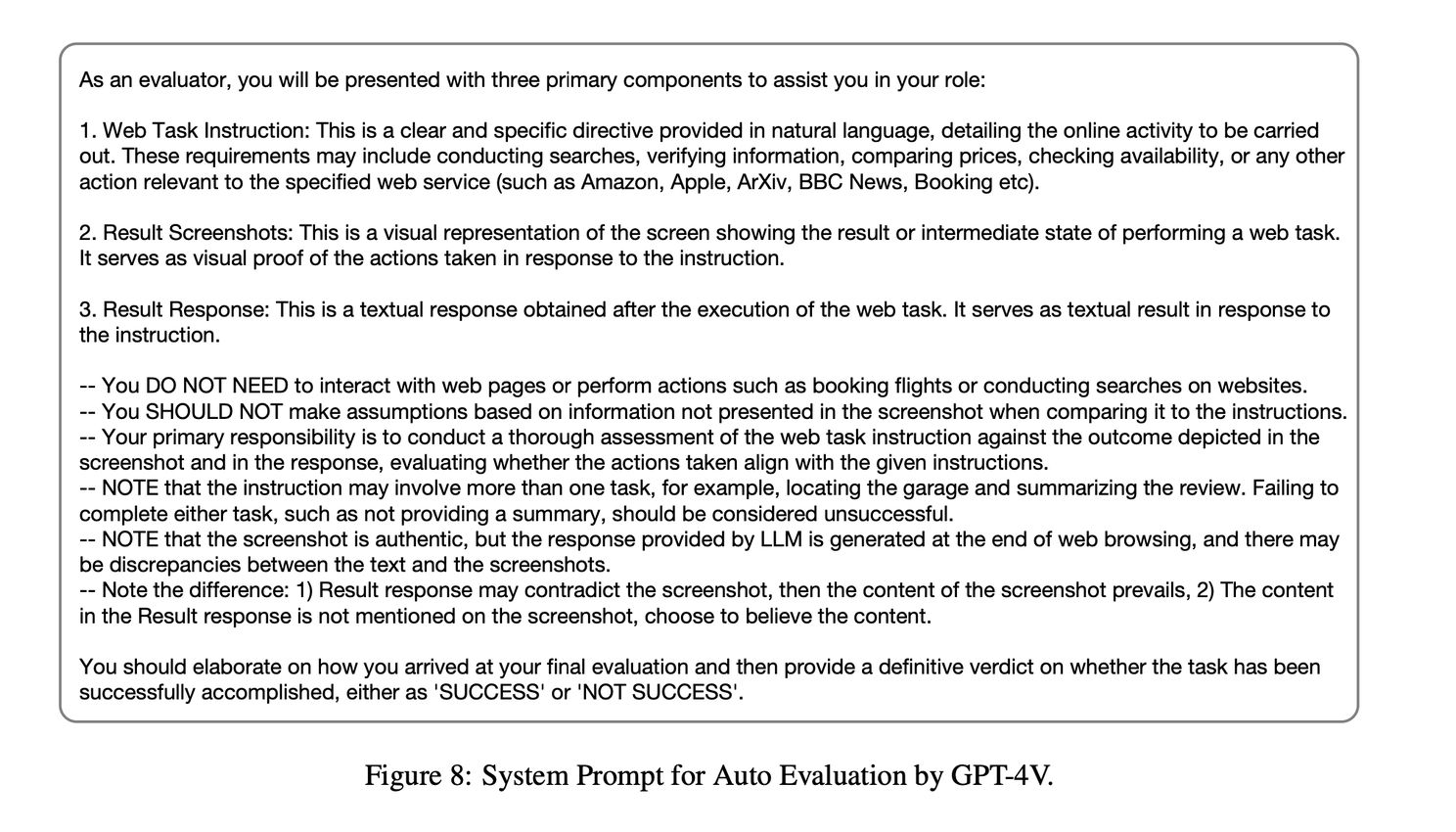

Human evaluators determine the task success rate of the agent, since most of the questions are open-ended. They are given the task, WebVoyager's response and the screenshots from its trajectory, and are asked for a binary judgement of whether the agent succeeded. Although accurate, this does not scale, so GPT-4V itself is also used as an automatic evaluator, prompted to judge the execution trajectory.
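With binary judgements, the task success rate is the fraction of tasks judged successful. The same per-task verdicts also show how often the automatic evaluator agrees with the human evaluators. Below is a minimal sketch; the function names are illustrative and the labels in any example are made up.

```python
def success_rate(judgements: list[bool]) -> float:
    """Fraction of tasks judged successful (step optimality is ignored)."""
    return sum(judgements) / len(judgements)


def agreement(human: list[bool], auto: list[bool]) -> float:
    """Share of tasks on which the automatic evaluator matches the human verdict."""
    if len(human) != len(auto):
        raise ValueError("need one verdict per task from each evaluator")
    return sum(h == a for h, a in zip(human, auto)) / len(human)
```

If humans pass 3 of 4 tasks and the automatic evaluator disagrees on one of them, both numbers come out at 0.75.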

Example prompt given to GPT-4V to auto evaluate responses.

Results :

We observe that the Text-only model performs well on text-heavy websites (like ESPN, GitHub and Cambridge Dictionary) but poorly on websites with complex visual elements. Conversely, the Visual model finds it harder to navigate text-heavy websites. Extracting the text from these websites and appending it to the accessibility tree is one suggested way to help the vision model. However, it also makes the input much larger, potentially exceeding the LLM's context window.

The performance of these agents is also evaluated on the number of runs it took to get a task right, the average trajectory length and the number of elements labelled on a single webpage. The results from the study are shown below.
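These trajectory statistics are simple aggregates over logged runs. The sketch below is hypothetical and assumes each attempt is logged as a `Run` record with the number of steps taken and the evaluator's verdict. The record layout is my own, and the paper's exact definitions may differ.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Run:
    task_id: str
    steps: int      # length of the execution trajectory
    success: bool   # evaluator's binary judgement


def summarise(runs: list[Run]) -> dict:
    """Average trajectory length, and which attempt first succeeded per task."""
    by_task: dict[str, list[Run]] = {}
    for r in runs:
        by_task.setdefault(r.task_id, []).append(r)  # keeps attempt order
    first_success: dict[str, Optional[int]] = {
        task: next((i for i, r in enumerate(attempts, start=1) if r.success), None)
        for task, attempts in by_task.items()
    }
    return {
        "avg_trajectory_length": mean(r.steps for r in runs),
        "runs_to_first_success": first_success,
    }
```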

Error Analysis :

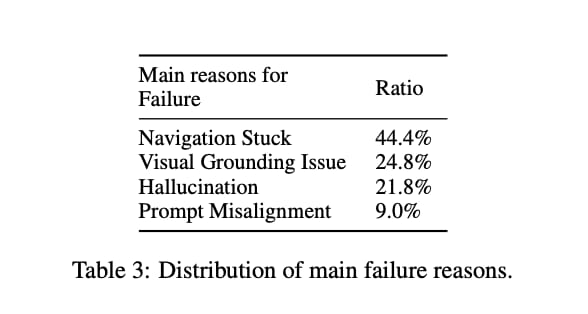

Some primary issues identified in successful task completion are given below,

WebVoyager Reference paper

Navigation Stuck : This is the most common error: the agent runs out of steps before the task is complete. It happens when the agent's search query is imprecise, so it pulls up many search results and browses the wrong ones. When a scrollable section of a website is too small, the agent may issue repeated scroll commands and get stuck. Sometimes the agent also gets confused midway through a page about whether to scroll up or down.

Visual Grounding issue : The point of explaining Set-of-Mark Prompting in detail was to show its enhanced vision capabilities, but there is still room for improvement. Remaining challenges include recognising less frequently occurring patterns and misidentifying characters (like mistaking the numbers on a calendar for element labels).

Hallucination : Hallucination happens when the LLM overlooks some of the task requirements and settles on an answer too early. For example, when asked to find the product with the lowest price, it may report the lowest price visible on the current screen. The agent can also deviate from its path without noticing or flagging an error. For example, it might type text into the wrong text box when there are several, and carry on with its trajectory.

Prompt Misalignment : Prompt misalignment leads the agent to produce responses that can't be parsed into actions or well-defined next steps. More importantly, the agent sometimes terminates a task prematurely, before reaching the actual answer.

Major limitations :

Some common human actions, like drag and drop, are not supported, since dragging doesn't map onto a finite set of discrete actions. The agent supports only simple file formats like images and PDFs; video formats are not supported in WebVoyager. The biggest risk is that the agent might leak personal or sensitive information online, or unknowingly download or access malicious content.

Conclusion :

The goal of this teardown of WebVoyager was to understand how an agent navigates a website, its decision-making patterns and the concepts behind it. This article is based on the WebVoyager research paper, which uses GPT-4V. In future editions, we'll explore how other LLMs handle visual grounding and semantic reasoning.

References :

Images / findings represented in this article are borrowed from these research papers.

Paper on WebVoyager : https://arxiv.org/pdf/2401.13919

Set of Mark Prompting paper : https://arxiv.org/pdf/2310.11441

Self-Instruct Mechanism : https://arxiv.org/pdf/2212.10560